Hello, hello there!

If you caught my last post, we dove into how to set boundaries around your flow metrics using natural process limits. We explored how these limits help you distinguish between normal variation and signals that something might be going wrong with your process.

Today, we’re taking it a step further by answering a critical question: How much data do you actually need to calculate a solid baseline for those limits? After all, detecting a true signal depends on having accurate limits, and that accuracy starts with a well-chosen baseline.

How Much Data Is Enough?

When calculating natural process limits, the size of your baseline dataset matters—a lot. You need enough data to capture the typical variation in your process without including outdated or irrelevant samples. So, what’s the magic number?

Here’s the rule of thumb: In most cases, you should use a baseline dataset of at least 10 but not much more than 20 points. Why this range, you ask?

Well, it’s all about avoiding two key mistakes:

- Mistaking noise for signal (reacting to routine variation).

- Mistaking signal for noise (missing real issues).

The sweet spot of roughly 10 to 20 data points gives you a solid foundation for minimizing both risks. With 10 points in your baseline, the risk of a false alarm (treating noise as a signal) falls below 2.5%, which is generally acceptable for a conservative analysis. At 20 data points, that risk drops even further, to below 1%.
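To make the idea of a baseline concrete, here is a minimal sketch of computing natural process limits from one. It assumes an XmR (individual values and moving range) chart, with limits set at the average plus or minus 2.66 times the average moving range. The function name `natural_process_limits` and the cycle-time values are hypothetical, chosen only for illustration.

```python
from statistics import mean

def natural_process_limits(baseline):
    """Return (lower, center, upper) natural process limits.

    Assumes an XmR chart: limits sit at the average of the baseline
    plus or minus 2.66 times the average moving range.
    """
    if len(baseline) < 3:
        raise ValueError("need at least 3 data points")
    center = mean(baseline)
    # Moving range: absolute difference between each pair of consecutive points.
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    avg_mr = mean(moving_ranges)
    return center - 2.66 * avg_mr, center, center + 2.66 * avg_mr

# Hypothetical cycle times (in days) forming a 12-point baseline.
cycle_times = [5, 7, 4, 6, 8, 5, 6, 7, 5, 6, 4, 7]
lower, center, upper = natural_process_limits(cycle_times)
print(f"lower={lower:.2f} center={center:.2f} upper={upper:.2f}")
# lower=0.51 center=5.83 upper=11.15
```

With 12 points, this baseline sits inside the 10-to-20 range discussed above; the same function works on any baseline size, but the fewer points you feed it, the less you should lean on the result.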

Going beyond 20 data points? You can, but here’s the catch: Adding more data after 20 points doesn’t significantly reduce your chances of a false alarm. Each additional point has diminishing returns in terms of improving the accuracy of your process limits.

Should You Keep Adding New Data to Your Baseline?

Now, you might be wondering: “If I keep collecting new data, should I just keep adding it to my baseline?”

The answer is yes, but only to a point. If your process remains stable, you can continue to collect new data beyond 20 points without much risk. However, at some stage, older data points will become outdated, and that’s when it makes sense to let them drop off in favor of newer observations. As long as your rolling baseline remains at around 20 points, you can be confident you’re capturing an accurate picture of your process.
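One simple way to keep a rolling baseline of around 20 points is a fixed-size window, sketched below. This is a simplification under stated assumptions: it treats "outdated" as "no longer among the 20 most recent points" and does not check whether the process is still stable before admitting a new point, which in practice is a judgment call. `BASELINE_SIZE`, `xmr_limits`, `add_observation`, and the throughput figures are all hypothetical.

```python
from collections import deque
from statistics import mean

BASELINE_SIZE = 20  # assumed target size of the rolling baseline

def xmr_limits(points):
    """Return (lower, upper) XmR limits: average +/- 2.66 * average moving range."""
    values = list(points)
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    center = mean(values)
    avg_mr = mean(moving_ranges)
    return center - 2.66 * avg_mr, center + 2.66 * avg_mr

# Once the deque holds BASELINE_SIZE points, each append drops the oldest one.
baseline = deque(maxlen=BASELINE_SIZE)

def add_observation(value):
    """Add a new data point and return updated limits (None until 3 points exist)."""
    baseline.append(value)
    return xmr_limits(baseline) if len(baseline) >= 3 else None

# Hypothetical weekly throughput for 25 weeks.
weekly_throughput = [3, 5, 4, 6, 5, 4, 7, 5, 6, 4, 5, 6, 3,
                     5, 4, 6, 5, 7, 4, 5, 6, 5, 4, 6, 5]
for value in weekly_throughput:
    current_limits = add_observation(value)

print(len(baseline))  # 20: the five oldest weeks have dropped off
```

`deque(maxlen=...)` handles the drop-off automatically, so the limits are always recomputed from the most recent window without any manual bookkeeping.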

One more thing to consider: While 20 points is a good target, if you’re just starting out, you can still (technically!) calculate limits with as few as 3 data points. Keep in mind, though, that fewer data points mean less certainty in your limits, so treat those early signals with caution.
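The rule of thumb from this post can be captured in a small helper. This is a sketch: the thresholds (3, 10, and 20 points) come from the discussion above, while the function name `baseline_confidence` and its labels are our own hypothetical wording.

```python
def baseline_confidence(n_points):
    """Classify a baseline size using this post's rule of thumb."""
    if n_points < 3:
        return "too few points to calculate limits"
    if n_points < 10:
        return "provisional: treat signals with caution"
    if n_points <= 20:
        return "recommended range"
    return "usable, but extra points add little"

for n in (2, 5, 12, 30):
    print(n, baseline_confidence(n))
```

A dashboard or script could show this label next to the limits so that early, thin baselines are visibly flagged rather than silently trusted.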

Now, if you’re looking for a way to put all of this into action without the hassle of manually calculating your process limits, we’ve got you covered.

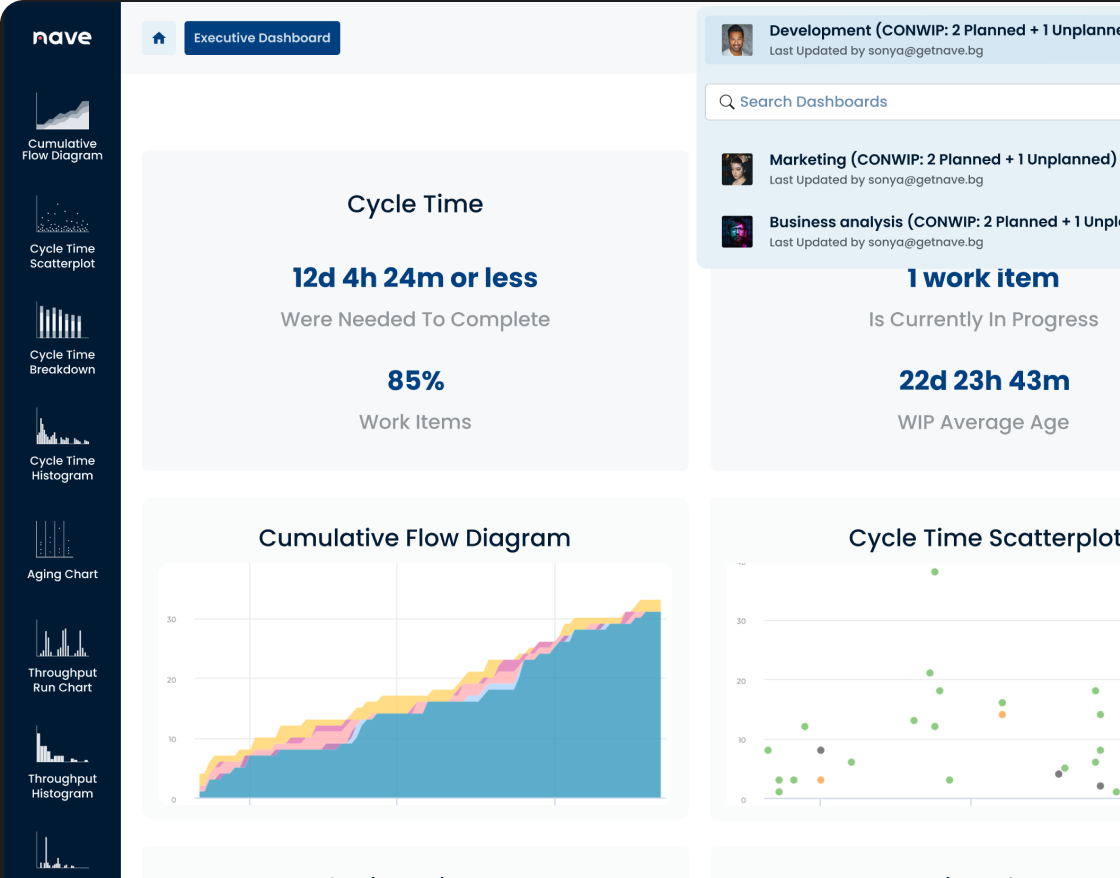

Our Process Improvement Dashboard is designed to do exactly that! It automates the entire process of gathering your data, calculating natural process limits, and identifying signals—so you can focus on making informed decisions, not crunching numbers.

At a glance, you’ll see whether you’re dealing with normal fluctuations or something that requires your attention. No set of limits can rule out false alarms or missed signals entirely, but our dashboard is built to help you stay on top of your flow metrics and continuously improve your process.
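If you’re curious what "identifying signals" means mechanically, here is a minimal sketch of the simplest check: flagging any point that falls outside the natural process limits. It is an illustration under assumed values, not how the dashboard is implemented, and it covers only this one detection rule. The function name `find_signals`, the limits, and the observations are hypothetical.

```python
def find_signals(values, lower, upper):
    """Return (index, value) pairs that fall outside the natural process limits."""
    return [(i, v) for i, v in enumerate(values) if v < lower or v > upper]

# Hypothetical limits and new cycle-time observations (in days).
lower, upper = 0.5, 11.2
new_cycle_times = [6, 9, 13, 4, 0.2]
print(find_signals(new_cycle_times, lower, upper))  # [(2, 13), (4, 0.2)]
```

Points between the limits are treated as routine variation and left alone; only the two values beyond them are reported as potential signals worth investigating.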

Next week, we’ll talk about when to update your limits and how to make sure your process stays stable as it evolves. Until then, keep calm, and let your data guide you!

Thanks for tuning in, and I’ll see you next Thursday, same time and place for more managerial insights.

Bye for now!

Source: Vacanti, D. “Actionable Agile Metrics for Predictability Volume II”